AI in medicine: Four paradigms

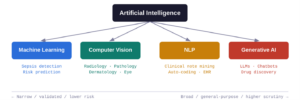

Modern AI in medicine is not a single technology but a family of related approaches. Machine learning (ML) trains statistical models on labelled examples to make predictions, powering everything from sepsis-alert systems to drug-interaction flags. Computer vision applies deep neural networks to images and video, enabling pixel-level pattern recognition that rivals (and sometimes surpasses) trained specialists. Natural language processing (NLP) extracts meaning from free text, such as clinical notes, discharge summaries, and scientific literature, and is the backbone of automated coding and literature mining. Finally, generative AI produces new content (text, images, structured data) by learning statistical patterns from vast training datasets and generating new outputs that follow those patterns. Large language models (LLMs) like GPT-4 and Claude are the most visible examples.
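
To make "trains statistical models on labelled examples" concrete, here is a minimal sketch of one of the simplest such models: logistic regression fitted by gradient descent. The features, labels, learning rate and epoch count are all hypothetical, chosen only for illustration; real clinical models rely on far larger datasets, validated features and established libraries.

```python
import math

# Synthetic, hypothetical labelled examples (NOT clinical data): each row holds two
# made-up numeric features, and each label is 1 (event) or 0 (no event).
X = [(0.2, 0.1), (0.4, 0.3), (0.3, 0.2), (1.5, 1.8), (1.7, 1.4), (1.9, 2.0)]
y = [0, 0, 0, 1, 1, 1]


def sigmoid(z: float) -> float:
    """Numerically stable logistic function mapping a score to a probability."""
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    ez = math.exp(z)
    return ez / (1.0 + ez)


def predict_proba(w, b, x):
    """Predicted probability of label 1 for a single example x."""
    return sigmoid(sum(wj * xj for wj, xj in zip(w, x)) + b)


def train_logistic_regression(X, y, lr=0.5, epochs=2000):
    """Fit weights w and bias b by batch gradient descent on the mean log-loss."""
    n, d = len(X), len(X[0])
    w, b = [0.0] * d, 0.0
    for _ in range(epochs):
        grad_w, grad_b = [0.0] * d, 0.0
        for xi, yi in zip(X, y):
            err = predict_proba(w, b, xi) - yi  # derivative of log-loss w.r.t. the score
            for j in range(d):
                grad_w[j] += err * xi[j]
            grad_b += err
        w = [wj - lr * gj / n for wj, gj in zip(w, grad_w)]
        b -= lr * grad_b / n
    return w, b


w, b = train_logistic_regression(X, y)
print(predict_proba(w, b, (0.3, 0.2)))  # expected to be well below 0.5
print(predict_proba(w, b, (1.8, 1.7)))  # expected to be well above 0.5
```

The learned weights are simply the numbers that best separate the labelled examples; the model has no notion of why the labels differ, which is the sense in which such systems are "statistical".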

Domain-specific AI: Computer vision in radiology

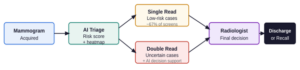

The clearest clinical wins have come from domain-specific vision models applied to medical imaging, and breast cancer screening is a well-studied example. A large prospective multicenter study across 12 German sites (463,094 women, 119 radiologists) found that AI-supported mammography screening increased the cancer detection rate by 17.6% compared with standard double reading, with no significant change in recall rates [1]. Separately, a prospective Korean cohort showed a 13.8% higher detection rate when radiologists used AI-based computer-aided detection in single-read settings [2]. Not all studies show a uniform advantage, and radiologists retain superior sensitivity in dense breast tissue; still, the overall picture is that AI functions as a powerful second pair of eyes, reducing radiologist workload and improving diagnostic accuracy in non-dense tissue [3].
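
A percentage gain in detection rate is a relative change in a rate, not a difference in percentage points. The sketch below uses hypothetical round numbers (not the data from the studies cited above) to show how the two readings differ.

```python
def detection_rate_per_1000(cancers_detected: int, women_screened: int) -> float:
    """Screen-detected cancers per 1,000 women screened."""
    return 1000 * cancers_detected / women_screened


def relative_change(new: float, baseline: float) -> float:
    """Relative change as a fraction of the baseline (0.10 means +10%)."""
    return (new - baseline) / baseline


# Hypothetical cohorts of equal size; illustrative numbers only.
baseline_rate = detection_rate_per_1000(cancers_detected=500, women_screened=100_000)  # 5.0 per 1,000
ai_rate = detection_rate_per_1000(cancers_detected=590, women_screened=100_000)  # 5.9 per 1,000

absolute_gain = ai_rate - baseline_rate  # extra cancers found per 1,000 women screened
relative_gain = relative_change(ai_rate, baseline_rate)  # fraction of the baseline rate
print(f"absolute: +{absolute_gain:.1f} per 1,000; relative: +{relative_gain:.0%}")
```

Here fewer than one extra cancer per 1,000 women translates into an 18% relative gain, which is why it helps to report both figures side by side.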

These imaging tools are relatively uncontroversial precisely because they are narrow. Performance is validated against standardized measures, such as sensitivity, specificity, area under the receiver operating characteristic curve (AUC), and cancer-detection rate, with direct comparison to expert radiologists. Regulatory pathways exist, accountability is clear, and the model’s role is augmentative rather than autonomous.
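
These measures are straightforward to compute once every case has a ground-truth label and a model score. The minimal sketch below uses eight hypothetical cases; the 0.5 operating threshold is an arbitrary assumption, and AUC is computed in its rank-based (Mann-Whitney) form.

```python
from itertools import product


def sensitivity_specificity(y_true, y_pred):
    """Sensitivity = TP / (TP + FN); specificity = TN / (TN + FP)."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    return tp / (tp + fn), tn / (tn + fp)


def roc_auc(y_true, scores):
    """AUC as the probability that a randomly chosen positive case scores higher
    than a randomly chosen negative case, with ties counted as half."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    wins = sum(
        1.0 if p_score > n_score else 0.5 if p_score == n_score else 0.0
        for p_score, n_score in product(pos, neg)
    )
    return wins / (len(pos) * len(neg))


# Eight hypothetical cases: 1 = cancer confirmed, 0 = no cancer; scores are made up.
y_true = [1, 1, 1, 0, 0, 0, 0, 0]
scores = [0.9, 0.7, 0.4, 0.6, 0.3, 0.2, 0.1, 0.05]
threshold = 0.5  # assumed operating point; moving it trades sensitivity for specificity
y_pred = [1 if s >= threshold else 0 for s in scores]

sens, spec = sensitivity_specificity(y_true, y_pred)
auc = roc_auc(y_true, scores)
print(f"sensitivity={sens:.2f} specificity={spec:.2f} AUC={auc:.2f}")
```

AUC depends only on how the scores rank the cases, not on the chosen threshold, whereas sensitivity and specificity describe a single operating point. This is why studies usually report both.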

Personalized medicine: The persistent gap between hype and reality

The promise of genetically tailored therapies has been a recurring theme since the Human Genome Project was completed in 2003. Despite enormous investment, truly personalized pharmacogenomics has yielded only a handful of approved applications, with oncology biomarker testing as the clearest success story. AI has revived some of these aspirations: AlphaFold’s protein structure predictions have dramatically shortened the structure-determination step that early drug discovery depends on, and ML models are now routinely used in de novo molecular design. But these remain largely pre-clinical tools, and the translational pipeline from computational insight to approved therapy is still measured in years to decades. Like imaging AI, they are domain-specific models with well-defined inputs and outputs; they simply operate at molecular rather than pixel scale.

Generative AI in medicine is mostly backstage for now

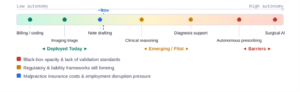

LLMs have found their most concrete medical home in healthcare administration. A 2024 survey found that 84% of large US health insurers were already using AI for some operational purpose, including prior-authorization review, claims coding, and drafting denial letters [4]. Generative AI is particularly well suited to this work because it can interpret complex payer rules, flag incomplete submissions, and synthesize clinical documentation into structured billing codes [5]. On the clinical side, applications remain limited: LLMs are used to simplify patient-facing reports, assist with clinical note summarization and, in some centers, draft responses to patient messages. At present, there is no setting in which generative AI autonomously replaces a physician in a high-stakes diagnostic or therapeutic decision.

Will generative AI replace doctors? The 1- to 5-year outlook

The benchmark numbers are striking: GPT-4 answers 86–90% of USMLE practice questions correctly [6], well above the passing threshold, and similar results have been obtained on the UK Medical Licensing Assessment [7]. Yet exam performance is a necessary but far from sufficient condition for clinical trust. Current LLMs are, at their core, black-box statistical models: they generate tokens that are probable given a prompt, not outputs that can be traced to explicit clinical reasoning chains. That opacity makes them fundamentally difficult to validate against the standardized performance measures that clinicians and regulators require. Emerging approaches such as chain-of-thought prompting, interpretable reasoning traces, and model “thinking” features may partially address this, but no consensus framework yet exists for certifying a generative AI system as fit for purpose in clinical decision-making.
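
The phrase "tokens that are probable given a prompt" can be made concrete. In the minimal sketch below, the four-word vocabulary and the logits are made up (real LLMs score tens of thousands of subword tokens at every step). The model's raw scores are turned into probabilities with a softmax, and the next token is then sampled from that distribution.

```python
import math
import random


def softmax(logits, temperature=1.0):
    """Turn raw scores (logits) into a probability distribution over tokens.
    Lower temperature sharpens the distribution; higher temperature flattens it."""
    scaled = [x / temperature for x in logits]
    m = max(scaled)  # subtract the max before exponentiating, for numerical stability
    exps = [math.exp(s - m) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical vocabulary and logits for continuing a prompt such as
# "The most likely diagnosis is" (made-up numbers, not from any real model).
vocab = ["pneumonia", "bronchitis", "asthma", "sepsis"]
logits = [2.0, 1.0, 0.5, -1.0]

probs = softmax(logits)
rng = random.Random(0)  # fixed seed so the sketch is reproducible
next_token = rng.choices(vocab, weights=probs, k=1)[0]

for token, p in zip(vocab, probs):
    print(f"{token}: {p:.3f}")
print("sampled next token:", next_token)
```

Nothing in `probs` records why one word outscored another; the distribution itself is the output. That is the gap between a plausible answer and a traceable clinical rationale described above.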

Even if the technical barriers were cleared, a second set of constraints would come into play. If AI models reach genuine expert-level performance across broad clinical domains, the employment implications would be profound and would likely generate significant regulatory and political pressure. Several US states have already passed laws governing AI use by health insurers [8]. Medical malpractice liability for AI-driven decisions also remains legally unresolved: who bears responsibility when a model makes a harmful recommendation? Liability insurance costs for AI services in high-stakes settings could substantially erode any economic advantage. Taken together, the realistic short-term trajectory is continued expansion of AI as a digital health support layer for tasks such as documentation, coding, triage and imaging analysis, while autonomous clinical decision-making remains the preserve of licensed physicians, backed by the accountability structures that medicine has developed over centuries.

Clinicians with AI readiness will lead

The most likely short-to-mid-term scenario is not a sudden handover of clinical responsibility to AI systems. Instead, the current trend is likely to accelerate, with physician-supervised AI taking over well-defined, high-volume tasks while physicians retain ownership of judgment-intensive, relationship-dependent care. Clinical skills such as history-taking, physical examination, patient communication and contextual reasoning will become comparatively more valuable as medical AI absorbs rote, routine work. The physicians best positioned to thrive in this environment will be those who can not only use AI tools but also critically interpret their performance metrics, identify their failure modes, and support their safe and appropriate integration into practice. The technology will keep advancing, so understanding it is now a requirement rather than an option.
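
One basic way to look for failure modes is to recompute a metric separately for each patient subgroup, because a good pooled number can hide a weak subgroup. The minimal sketch below uses hypothetical cases and takes breast density as the illustrative stratifier, echoing the dense-tissue finding discussed earlier. The subgroup labels and values are invented for illustration.

```python
from collections import defaultdict

# Hypothetical per-case records: (subgroup, true_label, model_prediction).
# Subgroup names and values are illustrative only, not from any study.
cases = [
    ("non-dense", 1, 1), ("non-dense", 1, 1), ("non-dense", 1, 1), ("non-dense", 1, 0),
    ("dense", 1, 1), ("dense", 1, 0), ("dense", 1, 0), ("dense", 1, 1),
    ("non-dense", 0, 0), ("dense", 0, 0), ("dense", 0, 1),
]


def sensitivity_by_subgroup(cases):
    """Sensitivity (detected positives / all true positives) per subgroup.
    True-negative and false-positive cases do not affect sensitivity."""
    detected = defaultdict(int)
    positives = defaultdict(int)
    for group, truth, pred in cases:
        if truth == 1:
            positives[group] += 1
            if pred == 1:
                detected[group] += 1
    return {g: detected[g] / positives[g] for g in positives}


result = sensitivity_by_subgroup(cases)
for group, sens in sorted(result.items()):
    print(f"{group}: sensitivity {sens:.2f}")
```

In this toy data, the pooled sensitivity (5 of 8 cancers detected) looks moderate, while the per-subgroup view shows the weakness is concentrated in one group. Real audits would also need enough cases per subgroup for the estimates to be meaningful.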

Key References

1 Eisemann N et al. “Nationwide real-world implementation of AI for cancer detection in population-based mammography screening.” Nature Medicine (2025). doi:10.1038/s41591-024-03408-6

2 Lee et al. “Artificial intelligence for breast cancer screening in mammography (AI-STREAM).” Nature Communications (2025). doi:10.1038/s41467-025-57469-3

3 Seker ME et al. “Evaluating the performance of AI and radiologists accuracy in breast cancer detection across breast densities.” ScienceDirect (2025). doi:10.1016/S3050577125000118

4 Mello MM & Rose S. “Denial — artificial intelligence tools and health insurance coverage decisions.” JAMA Health Forum 5:e240622 (2024).

5 Medical Economics. “2025 state of claims: when AI tools work best.” April 2025.

6 Mihalache A et al. “ChatGPT-4: an assessment in the United States Medical Licensing Examination.” Medical Teacher 46(3):366–372 (2024). doi:10.1080/0142159X.2023.2249588

7 Scientific Reports. “Assessing ChatGPT 4.0’s Capabilities in the UK Medical Licensing Examination (UKMLA).” Scientific Reports (2025). doi:10.1038/s41598-025-97327-2

8 Stateline. “Patients deploy bots to battle health insurers that deny care.” November 2025.